Enterprise Application Integration (EAI) arose in the 1990s, when many internal applications needed access to the various ERP solutions that were popular at the time. ERP packages such as SAP, PeopleSoft, and JD Edwards, along with customer relationship management (CRM) systems such as Clarify and Siebel, quickly turned into enterprise data silos, which made it crucial to reuse the information and features they held. EAI is an architectural strategy for integrating disparate software services and data. It aims to streamline and automate business processes without requiring significant changes to the existing applications and data models.

As a business grows, it forms departments with distinct areas of focus, interests, and expertise. This partitioning is necessary to keep team sizes manageable, to hire the best candidates for a specific set of duties, and to give teams enough autonomy to get the work done. While the need to divide is natural, all departments must cooperate by sharing data and business processes in order to achieve a common objective. Business processes evolve naturally over time; some are specific to one department while others span the entire company. Software applications are built or bought to support these business processes. Organizations may end up with a variety of applications and systems, some of which overlap, conflict, or are incompatible. These applications may use different supporting databases, operating systems, or programming languages. Integrating them can be exceedingly difficult, due in part to technological incompatibilities and the high cost of cross-training staff in the various systems.

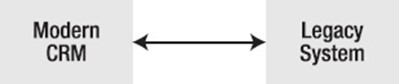

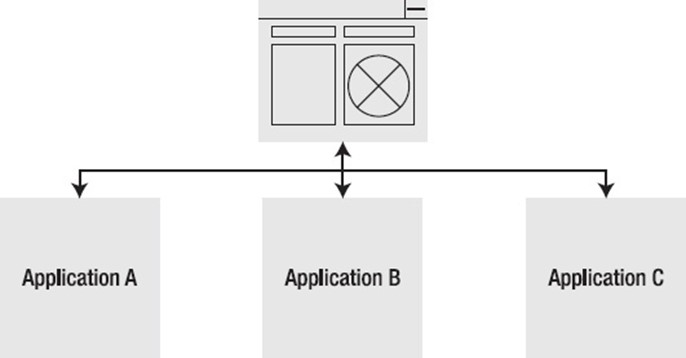

The need to share data and business processes among existing applications inside a company is the primary motivator for EAI. A typical example is a business that invests in a new CRM system (see Figure 1–1). The new system is a significant improvement over the previous homegrown mainframe system, which has long served the organisation well. Both systems often have to run side by side, mainly because some features of the old system are not yet implemented in the new one. In this case, new customer data is added to the CRM, but the legacy system must also be updated with that information to support the necessary enterprise business operations. The CRM may also need to use some of the old system's features.

Figure 1-1. Integration with Modern CRM and Legacy System

Businesses often want to use the best available software. Although a major corporation could meet its needs by buying applications from a single vendor, most businesses prefer the application considered best for each function. There are several reasons for this. Specialising sometimes produces better results, and it is prudent to avoid placing all of your business with one vendor: if that vendor goes out of business, support can become a problem. A custom application may also be the only way to meet a particular business requirement. Even with contemporary systems, there may be no universal means of communication that works with every vendor's software. Business applications run on different operating systems, including Linux, Mac, Windows, Solaris, HP-UX, and IBM AIX. They may be built on various databases, including Oracle, DB2, SQL Server, Sybase, RDB, and Informix. They are written for different languages and platforms, including Java, C, C++, .NET, COBOL, and ABAP. The old mainframe systems, such as those made by IBM and DEC, must not be forgotten either.

An information silo is a management system that cannot operate in tandem with other, related management systems. Even internally developed applications tend to have a vertical communication focus and an inward orientation. Despite the current drive toward open standards and the desire to harness the power of the Internet, information silos are extremely common in most enterprises. They are typically caused by a lack of coordination and shared objectives between organisational departments. Most current open source frameworks focus mainly on vertical, database-backed web applications and offer less support for horizontal connectivity between those applications. This may be another contributing factor, or merely a symptom of the spread of information silos. Information silos make it difficult for business processes to interoperate and prevent an organisation from using all of its departments to work toward a single objective. They also stop applications from using the Internet's full potential. Integration across information silos is another problem that EAI aims to solve.

The power and reach of the Internet created an opportunity to improve communication between businesses. Business-to-business (B2B) systems followed the original emphasis on business-to-consumer (B2C) applications. Sharing information electronically between firms can lower costs, boost productivity, and reduce human error. The electronic data interchange (EDI) standard was developed to support electronic commerce transactions between two computer systems or business partners. In EDI, a series of electronic messages is transferred automatically between computer systems, and the communication must adhere to well-specified agreements. The primary goal of EDI was to establish a uniform message format for B2B communication. EDI is still widely used today, particularly in the financial sector.

Although promising, EDI has a number of problems because it lacks a truly universal message format. EDI has no clear looping declarations. The separation of structure and data makes it harder to retrieve the right information. EDI also lacks a standardised parsing API and frequently requires proprietary software.

Other industries also use standards to help partners communicate. For instance, HL7 describes the secure sharing of patient health and care information in the US healthcare industry.

XML, on the other hand, was developed as a general-purpose data format that can easily be sent over Internet protocols such as HTTP. Widespread use has made XML an accepted format for exchanging messages. The need to expose business processes over HTTP led to the development of web services. The growth of web services is evidence of how crucial integration is.

Today's applications do not run on mainframes with amber-screen clients. They are web applications. Five years ago, the term "web application" would have meant a programme accessed through a web browser; today, it means a programme that maintains your data centrally and exposes its features in a variety of ways. Users increasingly interact with these programmes, and with one another, through tablets, mobile devices, and everyday tools such as chat, email, news feeds, and social networks like Facebook or Twitter. Developers can no longer assume that the user is at a desk and logged into a website. They must go to where the users are and make the application as easy as possible to use there. Integration, the subject of this discussion, has been among the most significant advances in application development over the past five years.

EAI implementations do not have the best success rate. Numerous reports have suggested that the majority of EAI projects fail, and that management difficulties rather than software or technical problems are typically to blame. Before moving on to the managerial issues, it is worth looking at the technical challenges of EAI.

At first, EAI was dominated by commercial vendors with a variety of proprietary solutions. Implementing an EAI solution required a thorough understanding of the vendor's software and tools. A number of the commercial products and approaches are covered later in this chapter. Until recently, there were no viable open source frameworks. Integration with open source was possible, but the results lacked the management and monitoring capabilities needed in a production environment. These solutions also required extensive custom coding of the business logic and of adapters for many existing enterprise systems. Spring Integration is one open source framework that has matured and is ready to meet your integration needs. Chapter 2 discusses alternative open source options.

Integration usually involves working across a variety of technology and business domains. Siebel, SAP, and PeopleSoft, for example, may each serve a different department, such as customer service or human resources. Each of these applications uses different technologies, configurations, and external communication protocols. Integrating with an application or system is typically very difficult without understanding the underlying endpoint technology. Adapter technology helps to overcome some of these challenges, but the applications themselves often have to be configured or modified before they can be integrated. Different systems expose different interaction options: PeopleSoft has business objects, Siebel has business components, and SAP has the Business Application Programming Interface (BAPI). These systems are powerful, but they typically require a system integrator with working knowledge of both the business domain and the applications being integrated. Depending on the number of integration points, this can be a real difficulty.

Often the target application cannot be modified to allow for integration. The application might be a commercial product, in which case any modification would void the warranty and support agreement. Or the application might be a legacy system, which makes changes difficult. Integration standards are important, yet few exist. Despite the hype surrounding XML and web services, these technologies are still rather fragmented. Although there are various standards organisations in the world today, the specifications are growing more and more inconsistent. Consider how many Web Services (WS-*) standards there are, and how many Java Specification Requests (JSRs) cover equivalent ground. Vendors with their own agendas often control the standards committees. On the plus side, the open source community is increasingly driving the adoption of standards.

Today's applications frequently consist of many moving parts connected through unknown networks and protocols. Assuming that the client and server will always be available is naive. Using messaging to establish asynchronous communication between two systems decouples the producer from the consumer's uptime, because messages are queued until the receiving system can handle them. It can also improve the responsiveness of each component, since neither has to wait for the other. Likewise, individual system components can be scaled out independently to meet capacity.

As with most business concerns, the technical problems are the easiest to resolve. The hard part is dealing with people, and EAI is no different. Because it affects the entire company, adopting EAI can be even more challenging than building a vertical application. EAI implementations frequently cross organisational boundaries, involve partners, and touch customers. The real fun starts when internal business politics enter the picture and straightforward questions take months to answer. At one multinational corporation, for example, software standards and procedures differed depending on which side of the Atlantic a division was located.

Integrations may involve a variety of processes, management styles, and political structures inside an organisation. Different areas may have different reasons for implementing integration, and their willingness to share data with one another can vary widely. A successful integration requires consensus on the data to be shared, its format, and how to map the various representations and interpretations between the domains. This is not always simple or possible, and compromises frequently have to be made.

Various factors within an organisation may dictate when integration can be implemented. One area may be eager to have the data available as soon as possible, while another is busy with a different project and has little enthusiasm for the integration effort. Since integrating with an application typically requires access to and support from its business owners, this has to be addressed.

Security is a constant concern, since data may be sensitive for privacy reasons or because it has commercial value. Integration requires access to this data, but obtaining the necessary authorization is not always simple. The success of the implementation depends on gaining this access while still meeting the security requirements.

A successful integration frequently requires communication between computer systems, business units, and information technology departments. Because of their scope, integration efforts have broad ramifications for the business. Integration processes that go awry can cost a company millions of dollars through misdirected payments, missing orders, and irate customers. In the end, getting along with everyone, from business owners to IT staff, is crucial and frequently more significant than the technical difficulties of an integration. Integration frameworks like Spring Integration lower the technical barriers, but you must also take the business motivations into account.

Four methods of integration have been used historically: file transfer, shared databases, remote service calls, and asynchronous messaging. One way to compare these methods is by how they affect coupling in your architecture. There are three primary forms of coupling:

Spatial coupling (communication): the need for a producer to know how to communicate with a consumer and how to handle error scenarios in that communication. A server-side error during an RPC operation is an example of spatial coupling.

Temporal coupling (buffering): the need for a producer and a consumer to be available at the same time in order to share data. A decoupled system uses buffering so that messages can be sent even when the consumer is not available to receive them.

Logical coupling (routing): the need for a producer to know how to reach the consumer. One remedy is to introduce a central, shared location through which both parties exchange data. Because the consumer only cares about the final message payload, it is unaffected if the producer changes (a new IP address, a new firewall, and so on) or adds extra steps before publishing messages.

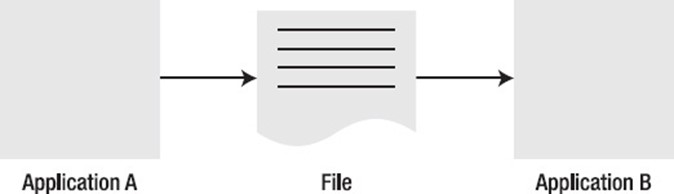

A file is typically the first method that springs to mind for transferring data (see Figure 1–2). To share data between two separate applications, one system writes a file containing the relevant information, and the other system mounts or polls an agreed, well-known directory for that file. The directory can be located almost anywhere: an FTPS share, an SFTP directory, a SAN-based file system mount, or a clustered file system like Hadoop's HDFS or VMware's VMFS. Once the file is available, the other system processes it for the data. File transfer requires agreement on the file format and on how often files are published and consumed. A significant problem with this method is managing all the formats and files and making sure that no files are missed. There is also always a delay between when a file is produced and when it is consumed, which can leave the systems out of sync. File transfer integrations are temporally decoupled, because the producer and the consumer do not have to be available at the same time for the integration to take place. The systems are also logically decoupled, because they only need to know about the shared directory and not about one another.

Figure 1-2. File Transfer Strategy
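The file transfer approach can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the directory layout, the JSON file format, and the function names `publish` and `poll_once` are all assumptions made for the example. The producer writes under a temporary name and renames the file when it is complete, and the consumer moves each processed file aside so that it is not read twice.

```python
import json
import os
import tempfile
from pathlib import Path


def publish(shared_dir: Path, name: str, records: list) -> Path:
    """Producer: write under a temporary name, then rename once the file is complete."""
    tmp = shared_dir / (name + ".tmp")
    tmp.write_text(json.dumps(records))
    final = shared_dir / (name + ".json")
    os.replace(tmp, final)  # a rename within one directory, so no half-written .json appears
    return final


def poll_once(shared_dir: Path, processed_dir: Path) -> list:
    """Consumer: read every complete file, then move it aside so it is not processed twice."""
    records = []
    for path in sorted(shared_dir.glob("*.json")):
        records.extend(json.loads(path.read_text()))
        os.replace(path, processed_dir / path.name)
    return records


def demo() -> tuple:
    """Publish one file, then poll twice; the second poll finds nothing new."""
    with tempfile.TemporaryDirectory() as root:
        inbox = Path(root) / "inbox"
        processed = Path(root) / "processed"
        inbox.mkdir()
        processed.mkdir()
        publish(inbox, "customers-001", [{"id": 1, "city": "Leeds"}])
        return poll_once(inbox, processed), poll_once(inbox, processed)
```

Neither function knows anything about the other system; both only know the shared directory and the agreed format, which is the logical decoupling described above. The delay between `publish` and the next `poll_once` is the synchronisation gap.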

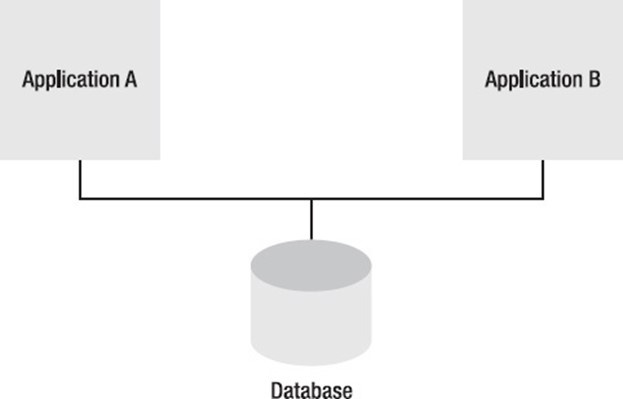

Using the same data source, or shared database, is another direct way to share data (see Figure 1–3). Integrating two systems becomes as easy as joining two tables. However, using a shared database for integration leads to a number of problems. First, it is difficult to design a uniform schema that satisfies the requirements of all the applications. A shared schema also creates interdependence between systems that may have different needs and schedules. Using a single database limits scalability, because of locking conflicts and of network latency when it is distributed across different sites. A shared database couples all participating systems to a well-known schema (the database tables), although the systems are temporally decoupled: as long as the database is accessible, one system does not need to be available for another to communicate a change. The systems are also logically decoupled from one another, because each only has to be aware of the shared database.

Figure 1-3. Shared Database Methodology
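As a rough illustration of the shared database approach, the sketch below uses Python's built-in `sqlite3` module with an in-memory database. The `customer` table, its columns, and the two functions standing in for a CRM and a billing system are hypothetical names chosen for the example.

```python
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    """The one schema that every participating system is coupled to."""
    conn.execute(
        "CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT, address TEXT)"
    )


def crm_add_customer(conn: sqlite3.Connection, name: str, address: str) -> int:
    """The CRM writes a customer row and returns its id."""
    cur = conn.execute(
        "INSERT INTO customer (name, address) VALUES (?, ?)", (name, address)
    )
    conn.commit()
    return cur.lastrowid


def billing_lookup_address(conn: sqlite3.Connection, customer_id: int):
    """Billing reads the same row later; the CRM does not need to be running."""
    row = conn.execute(
        "SELECT address FROM customer WHERE id = ?", (customer_id,)
    ).fetchone()
    return row[0] if row else None


conn = sqlite3.connect(":memory:")
create_schema(conn)
customer_id = crm_add_customer(conn, "Ada", "1 Main St")
address = billing_lookup_address(conn, customer_id)
```

Both functions depend on the column names in `customer`, so a schema change needed by one system forces a change in the other. That is the interdependence the shared schema creates.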

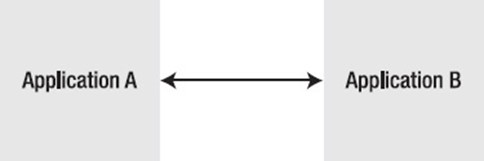

If information or a process needs to be shared within an organisation, one option is to expose the functionality through a remote service (see Figure 1–4). Functionality can be made available to the rest of the company through, for instance, an EJB service or a SOAP service. Because the implementation is encapsulated behind a service, an application can change the underlying implementation without affecting the integration solution, provided that the service interface remains constant. The important point to keep in mind is that integration through a service is synchronous: both the client and the service must be available and aware of one another for the integration to take place.

Figure 1-4. Remote Service Call Approach

The challenge of integration is to let applications share functionality and data in real time without coupling the systems too tightly or raising reliability concerns during development and execution. File transfer decouples the applications well but has inherent performance problems. A shared database gives timely access to data but binds all applications to a single database, and it does not let applications share their functional behaviour. A remote service is an option, but extending a single-application model to integration causes numerous problems. What looks like work on a single application turns into distributed development. Service calls look like local calls, yet they carry all the overhead and risk of network traffic: they are slower and more vulnerable to failure. One failed application has the potential to bring down the entire business. What is required is something resembling file transfer, but without the performance problems.

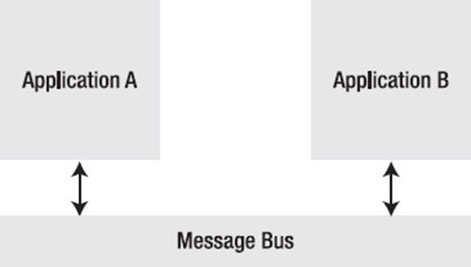

Messaging is a strategy for transferring packets of data frequently, immediately, reliably, and asynchronously, using a customisable format. Through messages, two connected systems can share data and invoke behaviour (see Figure 1–5). The well-known hub-and-spoke Java Message Service (JMS) architecture is an illustration of this. The two systems do not have to be running at the same time for a message to be sent. An asynchronous approach also forces developers to confront the challenges of working with remote applications. Messages can be transformed in transit without the sender or the receiver being aware of the change. Messaging can communicate both processes and data. A message may be broadcast to many receivers or directed at one specific receiver among many. Messaging meets the extensibility and scalability requirements of integration.

Figure 1-5. Messaging Approach
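The temporal decoupling that messaging provides can be shown with a small in-process sketch. Here Python's `queue.Queue` stands in for a broker-managed queue; a real broker would add persistence, delivery guarantees, and network transport. The message shape and the names `consumer` and `run_demo` are assumptions made for the example.

```python
import queue
import threading

_STOP = object()  # sentinel telling the consumer to shut down


def consumer(channel: queue.Queue, handled: list) -> None:
    """Take messages off the channel at the consumer's own pace."""
    while True:
        message = channel.get()
        if message is _STOP:
            break
        handled.append(message["payload"].upper())


def run_demo() -> list:
    channel = queue.Queue()  # stands in for a broker-managed queue
    handled = []

    # The producer sends while no consumer is running; the messages simply wait.
    for order_id in ("order-1", "order-2"):
        channel.put({"type": "order.created", "payload": order_id})

    # The consumer comes up later and drains the backlog in order.
    worker = threading.Thread(target=consumer, args=(channel, handled))
    worker.start()
    channel.put(_STOP)
    worker.join()
    return handled
```

The producer never calls the consumer directly and does not wait for it, so either side can be restarted or replaced without the other noticing, as long as the message format stays the same.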

Most systems are driven by events. Rather than following a fixed sequence, systems respond to changes and interesting events that occur within the business. These events are communicated as messages that carry details about the event and instructions for processing it. Messaging decouples the producer from the consumer, so that neither depends on the other's availability, speed, or public interfaces. The producer publishes a message as soon as it can and lets the consumer take its time processing it.

There are two ways to communicate data between systems: either the client requests it (a pull system), or the remote system sends it when something has changed (a push system).

Traditionally, data has been gathered using a pull technique. A web service call, a remote procedure call, and a database query are all examples of pull-oriented communication. Such integrations are frequently point-to-point, meaning that every interested party must know how to communicate directly with every other system it needs a result from. In architectures with many systems, maintaining these connections quickly becomes onerous. The number of possible connections between communicating devices is described by Metcalfe's law, named after Robert Metcalfe, a co-inventor of Ethernet and the founder of 3Com. It can be used to determine the number of distinct connections needed when any number of systems must all communicate with one another point-to-point. The formula is n(n-1)/2, where n is the number of connected nodes, and the results can be quite frightening! Two nodes need one connection (2(2-1)/2 = 1), five nodes need ten connections (5(5-1)/2 = 10), and ten nodes need forty-five connections (10(10-1)/2 = 45)!
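The arithmetic is easy to check with a short Python sketch. The function name `point_to_point_links` and the list of system names are made up for the illustration; the second half cross-checks the closed form by enumerating every pair of systems explicitly.

```python
from itertools import combinations


def point_to_point_links(n: int) -> int:
    """Distinct links needed so that each of n systems can reach every other directly."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1) // 2


# Cross-check the closed form by listing every pair of systems explicitly.
systems = ["crm", "billing", "shipping", "erp", "portal"]
pairs = list(combinations(systems, 2))
```

Each entry in `pairs` is a connection that someone has to build, secure, and maintain, which is why point-to-point integration becomes hard to manage as the number of systems grows.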

Pull-based systems have synchronisation gaps. A system's state may change, but consumers will not learn of the change until the next poll. If, for example, a poll takes place every ten seconds, clients may hold data that is up to ten seconds out of date. In some systems (social networking "status" updates, for instance) this is not a major concern. In others (stock market trading, for instance) the delay is unacceptable.

Event-driven architecture (EDA) is a software design paradigm that promotes the production, detection, and consumption of events as messages. In essence, EDA allows events to be transmitted between services and loosely coupled software components. An event-driven system consists of event publishers and event consumers, often called subscribers. Because event-driven systems are by nature designed to deal with unpredictable and asynchronous environments, building applications and systems around an event-based architecture makes them more responsive. Figure 1-6 shows a simple illustration of an event-driven architecture.

Figure 1-6. Event-Driven Architecture (EDA)
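The publisher and subscriber roles can be sketched with a tiny in-process event bus. This is a simplified, synchronous illustration; the `EventBus` class and the event name `customer.address_changed` are hypothetical, and a real event-driven system would normally deliver events asynchronously through a broker.

```python
from collections import defaultdict


class EventBus:
    """Routes each published event to every handler subscribed to its type."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._subscribers[event_type].append(handler)

    def publish(self, event_type, payload):
        """Deliver the payload to each subscriber and return how many were notified."""
        handlers = list(self._subscribers[event_type])
        for handler in handlers:
            handler(payload)
        return len(handlers)


bus = EventBus()
billing_seen = []
shipping_seen = []
bus.subscribe("customer.address_changed", billing_seen.append)
bus.subscribe("customer.address_changed", shipping_seen.append)

notified = bus.publish("customer.address_changed", {"id": 7, "city": "Leeds"})
ignored = bus.publish("order.cancelled", {"id": 99})  # nobody subscribed to this type
```

The publisher only knows the event type. Subscribers can be added or removed without touching the publishing code, which is what keeps the components loosely coupled.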

Staged event-driven architecture (SEDA) is an approach to building a system that can sustain massive concurrency without many of the problems of a conventional thread-based or event-based approach. The fundamental idea is to divide the application logic into a series of stages connected by event queues. Each stage can be tuned to handle more load by increasing its thread count (increasing concurrency). For more details on SEDA, see "SEDA: An Architecture for Well-Conditioned, Scalable Internet Services" by Matt Welsh, David Culler, and Eric Brewer. Incidentally, Eric Brewer also formulated the CAP theorem.
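The staged idea can be sketched with Python's standard `queue` and `threading` modules. This is a toy illustration under stated assumptions, not the design from the SEDA paper: the `Stage` class, the two example stages, and the thread counts are all invented for the example, and real SEDA stages also include controllers that adapt their resources to load.

```python
import queue
import threading

_STOP = object()  # sentinel used to shut a stage's workers down


class Stage:
    """One stage: an inbound event queue drained by a configurable number of threads."""

    def __init__(self, handler, out_queue, threads):
        self.inbox = queue.Queue()
        self._handler = handler
        self._out = out_queue
        self._workers = [threading.Thread(target=self._run) for _ in range(threads)]

    def start(self):
        for worker in self._workers:
            worker.start()

    def _run(self):
        while True:
            event = self.inbox.get()
            if event is _STOP:
                break
            self._out.put(self._handler(event))

    def stop(self):
        """Queue one sentinel per worker behind the pending events, then wait for them."""
        for _ in self._workers:
            self.inbox.put(_STOP)
        for worker in self._workers:
            worker.join()


def run_pipeline(raw_events):
    results = queue.Queue()
    # The second stage is assumed to be the slower one, so it gets more threads.
    enrich = Stage(lambda value: value * 10, results, threads=4)
    parse = Stage(int, enrich.inbox, threads=1)
    parse.start()
    enrich.start()
    for event in raw_events:
        parse.inbox.put(event)
    parse.stop()   # every parsed event has reached enrich.inbox once this returns
    enrich.stop()
    collected = []
    while not results.empty():
        collected.append(results.get())
    return sorted(collected)  # worker threads may finish in any order
```

Raising `threads` for one stage increases the concurrency of that stage alone, while the queues between stages absorb bursts of load.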

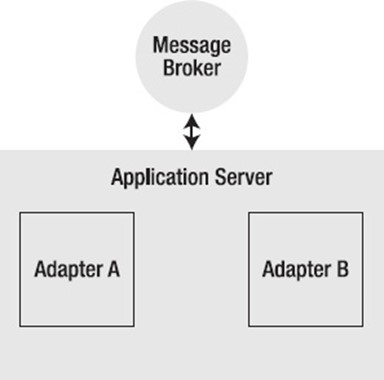

EAI was conceived to address the sharing of data and business processes across the company, and its architectures have gone through several stages of evolution since then. Older integration methods included platform-neutral RPC such as CORBA and Oracle's (formerly BEA's) Tuxedo. To address the problems with tight coupling and real-time access to data and operations described earlier, EAI moved to message brokers. An adapter connected each application to the broker, translating the application's protocol into a format the message broker could understand. The adapter software typically ran inside some form of container, which provided basic support such as configuration and lifecycle management (see Figure 1–7).

Figure 1-7. Traditional EAI Adapter and Broker Architecture

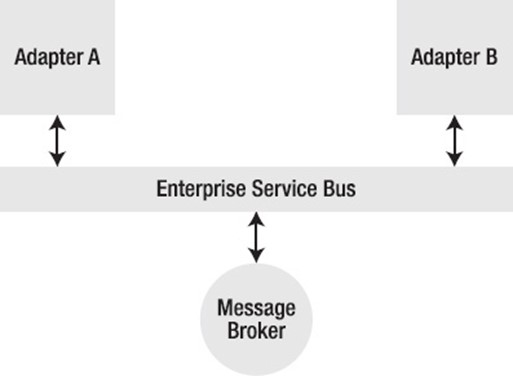

At the same time, the need for B2B integration drove the development of EDI and the exchange of documents via email, FTPS/SFTP, HTTP, and other methods. Application servers were developed next to meet these and other requirements. Eventually, the application servers and the adapter containers merged (see Figure 1–8).

Figure 1-8. Application Server Architecture

After a brief return to remote procedure calls with service-oriented architecture (SOA), messaging technology appears to be back. Today's architectures require service layers that are asynchronous, loosely coupled, stateless, and horizontally scalable, for which messaging is well suited, along with standard data interchange formats, for which XML is well suited. The movement toward open, standards-based integration led to the enterprise service bus (ESB): a lightweight adapter container with open-standards-based message routing capabilities (see Figure 1–9).

Figure 1-9. Enterprise Service Bus (ESB) Architecture

Although it offers ready-to-use routing and integration technologies, an ESB is still a server and still encourages the use of separate servers. Many people need something even more lightweight that can be embedded in an application or used standalone. Integration frameworks like Apache Camel and Spring Integration have answered this need quite effectively. Even traditional ESB vendors like MuleSoft are increasingly using frameworks to make their adapters usable independently of the broker. Time will tell whether this strategy succeeds.

EAI has historically been dominated by costly proprietary systems that required specialised expertise to implement. These have traditionally been the most effective means of solving integration problems. The sections that follow examine a few of the better-known integration products.

webMethods is a set of integration products now offered by Software AG. Its primary components are the Integration Server and a message broker. The Integration Server is a forerunner of today's application server. It was created to support B2B integration and is essentially an HTTP server on steroids, offering a platform for integration between businesses over the Internet. It supports EDI and custom XML messages in addition to its own process flow language. webMethods expanded its product line with an enterprise integration platform through the acquisition of Active Software, whose enterprise-grade message broker and collection of adapters support integration with all the major ERP and legacy systems. Further acquisitions added monitoring and business process support to the platform. Commercial products like webMethods offer a set of visual tools that make deploying an integration solution easier and faster.

TIBCO is another significant player in the enterprise integration market. With messaging functionality from TIB/Rendezvous and an integration server package named ActiveEnterprise, TIBCO offers software comparable to webMethods'. With a variety of application adapters, TIBCO has been used in the financial services, telecommunications, electronic commerce, transportation, manufacturing, and energy sectors. TIBCO has also acquired Spotfire to enter the analytics and next-generation business intelligence market, DataSynapse to enter the grid and cloud computing market, and Netrics to enter the enterprise data matching software market.

MuleSoft is a vendor that provides an integration platform to help businesses connect data, applications, and devices across on-premises and cloud computing environments. The company's platform, called Anypoint Platform, includes various tools to develop, manage, and test the application programming interfaces (APIs) that support these connections. Salesforce, a software as a service (SaaS) provider, acquired MuleSoft in May 2018 and now uses MuleSoft technology as part of its Salesforce Integration Cloud.

Vitria has two primary software product lines:

BusinessWare: an integration platform that includes business process management (BPM) and lets customers perform general business process management, enterprise application integration, and B2B integration.

M3O: a product that lets users track processes across all internal business operations and respond accordingly. It combines web technology, BPM, and business activity monitoring (BAM).

MQSeries is IBM's message-oriented middleware (MOM) product. Combined with IBM WebSphere and auxiliary technologies, it provides a comprehensive integration solution that includes a collection of application adapters as well as visual development and configuration tools.

SonicMQ started out as a message broker implementing the JMS API. Sonic ESB, which supports adapters and message routing configuration, was added later. A BPEL process engine server and a visual tool named Sonic Workbench rounded out the integration suite.

Axway also offers applications for enterprise and business-to-business (B2B) integration. Its solutions include a customizable B2B integration platform, analytics, services, and tailored applications.

Oracle ESB consists mostly of the message router and application server from BEA AquaLogic and the message broker from WebLogic. Together with various other Oracle products, it forms an integration suite that includes visual tools.

BizTalk is Microsoft's enterprise integration product. Its limited product range and its Windows-only support have kept it from wider market penetration.

Broadly speaking, integration is about connecting computer systems, businesses, and people. Integration implementations for enterprise customers typically follow one of three main patterns: data synchronisation, web portals, and workflow systems. The bulk of integration requests fall within one of these three types.

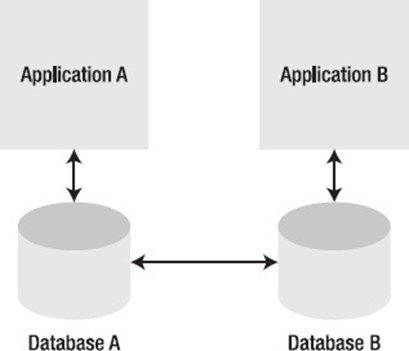

Data synchronisation, the most frequently requested sort of integration, is used when multiple business processes in an organisation need access to the same data. It is almost an anti-pattern, since it is often a stopgap where a façade pattern could be used instead. A customer's address, for instance, might be used in systems for managing customer relations, billing the customer, and shipping products. Each system typically has its own data store for customer information, for reasons such as performance and differing domain models. Whenever the customer changes address, for instance, each system must update its view of the customer information. A data synchronisation integration pattern can achieve this (see Figure 1–10).

Data replication works only if every application uses the same database vendor and schema structure, which is typically not the case when each application has its own technology stack. Alternatively, the data can be exported to files and re-imported into the other system. This method can be laborious, since it is often hard to identify which data has changed and the data must be transferred between the systems repeatedly. The frequency of this synchronisation also determines how current the data is.

The typical integration approach to data synchronisation uses a message-based system that carries the data records inside messages. To know when to send the data to the other systems, a mechanism is needed to detect when a record is created or changed, such as a database trigger or a hook or façade in the application.

Figure 1-10. Data synchronisation pattern
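The message-based approach described above can be sketched in a few lines of Python. This is a minimal illustration rather than a production design: the in-memory `MessageBus` stands in for a real message broker, and every name here (`CustomerChanged`, `CustomerStore`, `CrmFacade`) is hypothetical. The façade plays the role of the change-detection hook, publishing a message only when a record actually changes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class CustomerChanged:
    """Message carrying the changed record (hypothetical message shape)."""
    customer_id: str
    address: str


class MessageBus:
    """Tiny in-memory stand-in for a message broker."""

    def __init__(self) -> None:
        self._subscribers: List[Callable[[CustomerChanged], None]] = []

    def subscribe(self, handler: Callable[[CustomerChanged], None]) -> None:
        self._subscribers.append(handler)

    def publish(self, message: CustomerChanged) -> None:
        for handler in self._subscribers:
            handler(message)


class CustomerStore:
    """One system's private copy of the customer data."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.addresses: Dict[str, str] = {}

    def apply(self, message: CustomerChanged) -> None:
        # Subscriber side: update the local copy from the incoming message.
        self.addresses[message.customer_id] = message.address


class CrmFacade:
    """Facade over the CRM store: every real change also publishes a message."""

    def __init__(self, store: CustomerStore, bus: MessageBus) -> None:
        self._store = store
        self._bus = bus

    def change_address(self, customer_id: str, address: str) -> None:
        if self._store.addresses.get(customer_id) == address:
            return  # nothing changed, so nothing to synchronise
        self._store.addresses[customer_id] = address
        self._bus.publish(CustomerChanged(customer_id, address))


bus = MessageBus()
crm, billing, shipping = (CustomerStore(n) for n in ("crm", "billing", "shipping"))
bus.subscribe(billing.apply)
bus.subscribe(shipping.apply)

facade = CrmFacade(crm, bus)
facade.change_address("c-42", "1 New Street")
```

After the call, the billing and shipping stores hold the same address as the CRM without either of them knowing about the CRM directly; they only know the message format.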

Business owners and executives frequently need data from many systems in order to answer specific questions or to obtain a broad overview of the entire company. It is preferable to obtain this information from a single source rather than searching through numerous programmes. For instance, a customer service representative who has to check the status of an order may need data from several sources, including the web application that processed the transaction and a third-party programme that supports the fulfilment system. Similarly, a business executive may want to track the activity of various regions on a single web page rather than in multiple programmes. Web portals combine data from numerous sources into a single display, without requiring the user to log in to each programme that supports the various business areas.

In recent years, portal applications have proliferated, enabling users to customise a web page made up of various frames, or portlets, that together offer a composite view (see Figure 1-11). Most portal frameworks also permit some degree of interaction between the frames. In addition, integration frameworks can merge data from several sources into a single model, for example by joining records on shared attributes such as customer or order IDs.

Figure 1-11. Web portal pattern
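As a rough illustration of merging data on a shared key, the sketch below joins two hypothetical data extracts on order ID to build one composite view. The sample records, the field names, and the choice to show orders unknown to the fulfilment system as "pending" are all assumptions made for this example.

```python
from typing import Dict, List

# Hypothetical extracts from two separate systems, keyed by order ID.
web_orders = {
    "o-1": {"customer_id": "c-42", "total": 99.50},
    "o-2": {"customer_id": "c-7", "total": 15.00},
}
fulfilment = {
    "o-1": {"status": "shipped"},
}


def composite_order_view(orders: Dict[str, dict], shipments: Dict[str, dict]) -> List[dict]:
    """Join both sources on order ID into a single model for display."""
    view = []
    for order_id in sorted(orders):
        row = {"order_id": order_id, **orders[order_id]}
        # Assumption: orders unknown to the fulfilment system are shown as pending.
        row["status"] = shipments.get(order_id, {}).get("status", "pending")
        view.append(row)
    return view


rows = composite_order_view(web_orders, fulfilment)
```

A portal page could render `rows` directly, so the user sees the order and its fulfilment status together without opening either underlying programme.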

Completing a business process may involve both automated agents and human actors, and it may cross numerous business areas and systems within a company. Loan approval is the classic example of a workflow because it includes both system and human actors and involves a number of steps. The scenario is as follows: a customer asks a bank for a loan. The bank representative enters the request into the system, where it first goes through basic checks to see whether the requester has good credit, the necessary paperwork, and so on. If everything checks out and the amount is considered low risk, the loan is approved. If the loan amount is high risk, additional review is needed: someone from the risk assessment department must manually review the request and decide, case by case, whether the loan should be approved. Finally, a letter informing the requester of the outcome (approved or not approved) is printed and sent to them. In this instance, the request passed through at least two different systems and required a worker with specific authority to review it before the process could be completed.
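The steps of this scenario can be sketched as a small function. This is only an illustration of the control flow: the `LOW_RISK_LIMIT` threshold, the field names, and the `manual_review` callback that stands in for the human reviewer are assumptions, since the text gives no concrete values.

```python
from dataclasses import dataclass
from typing import Callable

LOW_RISK_LIMIT = 10_000  # assumed threshold; the text does not give one


@dataclass
class LoanRequest:
    applicant: str
    amount: int
    good_credit: bool
    documents_complete: bool


def process_loan(request: LoanRequest, manual_review: Callable[[LoanRequest], bool]) -> str:
    """Run the request through the workflow steps and return the letter text."""
    # Step 1: automated basic checks.
    if not (request.good_credit and request.documents_complete):
        approved = False
    # Step 2: low-risk amounts are approved automatically.
    elif request.amount <= LOW_RISK_LIMIT:
        approved = True
    # Step 3: high-risk amounts go to a human in risk assessment.
    else:
        approved = manual_review(request)
    # Step 4: notify the requester of the outcome.
    status = "approved" if approved else "not approved"
    return f"Dear {request.applicant}, your loan request was {status}."


def reviewer(request: LoanRequest) -> bool:
    """Stand-in for a human decision; a real reviewer would judge case by case."""
    return request.amount < 50_000


letter_small = process_loan(LoanRequest("Ada", 5_000, True, True), reviewer)
letter_large = process_loan(LoanRequest("Bob", 80_000, True, True), reviewer)
```

Passing the reviewer in as a callback keeps the human step separate from the automated ones, which mirrors how a workflow engine would pause the process and wait for a person to act.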

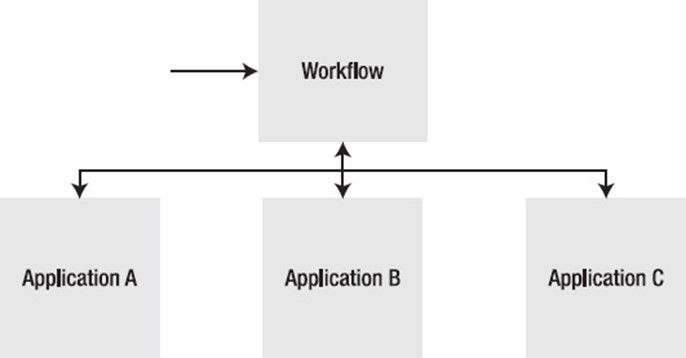

A variety of workflow engines are available, including those that use BPEL and BPMN to express process flows. The process flow can be designed at a high level without worrying about the actual implementation. Later, the process can be implemented by using an integration framework to connect the workflow with the various applications (see Figure 1-12).

Figure 1-12. Flowchart of the workflow

The Spring Integration Framework grew out of the need for a simple, open-source integration framework built on the widely used Spring Framework, which is among the most widely adopted Java frameworks in businesses. Spring Integration extends the plain old Java object (POJO) programming model to support the common integration patterns and to build on the Spring Framework's existing enterprise integration functionality. It works with a small set of basic integration idioms: messages, channels, and endpoints. Spring Integration enables messaging within Spring-based applications and interacts with external systems through its adapter framework, which offers a higher level of abstraction over Spring's existing support for remote method invocation, messaging, scheduling, and other features. Because it uses the same development paradigm and idioms as the Spring Framework, Spring Integration should be straightforward for Spring developers to learn.
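To make the three idioms concrete, here is a language-neutral sketch in Python. It is not Spring Integration's API: the `Message`, `Channel`, and `transformer` names below are hypothetical and only mirror the concepts of a message (payload plus headers), a channel (which decouples sender from receiver), and an endpoint (which connects plain business logic to a channel).

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class Message:
    """A payload plus headers (metadata about the payload)."""
    payload: Any
    headers: Dict[str, Any] = field(default_factory=dict)


class Channel:
    """Decouples producers from consumers: senders only know the channel."""

    def __init__(self) -> None:
        self._handlers: List[Callable[[Message], None]] = []

    def subscribe(self, handler: Callable[[Message], None]) -> None:
        self._handlers.append(handler)

    def send(self, message: Message) -> None:
        for handler in self._handlers:
            handler(message)


def transformer(fn: Callable[[Any], Any], output: Channel) -> Callable[[Message], None]:
    """Endpoint that applies a plain function to the payload and forwards the result."""
    def handle(message: Message) -> None:
        output.send(Message(fn(message.payload), message.headers))
    return handle


received: List[Message] = []
inbound, outbound = Channel(), Channel()
inbound.subscribe(transformer(str.upper, outbound))  # business logic stays a plain function
outbound.subscribe(received.append)                  # terminal endpoint collects results

inbound.send(Message("hello", {"source": "demo"}))
```

The business logic here (`str.upper`) knows nothing about messaging, which is the point of the POJO-style model: the endpoint wraps ordinary code and the channel carries the messages between endpoints.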

This chapter has covered the fundamentals of Enterprise Application Integration (EAI) and of integration in general, including the motivations and difficulties typical of an integration solution. It has also discussed the fundamental methods for putting integration into practice: file transfer, shared databases, remote procedure calls, and messaging. The desire for real-time information has given rise to event-driven architectures, in which data is published as events (in messages) as soon as it becomes available.

To learn more about how EAI can support your goals, say hello at info@eniquesolutions.com and we will get back to you.